It’s hard to get clear, objective data about the overall state of self-service technology. Case studies about specific projects are plentiful, but, as the saying goes, “the plural of anecdote is not data.” In addition, you always have to account for bias in that reporting: Vendors are going to highlight the data that makes their technology look good. Projects that succeed get touted, and projects that fail get buried quietly.

If we want hard answers, we have to get a little more clever. Data released last week allows us to do just that.

Average Call Duration Trending Up

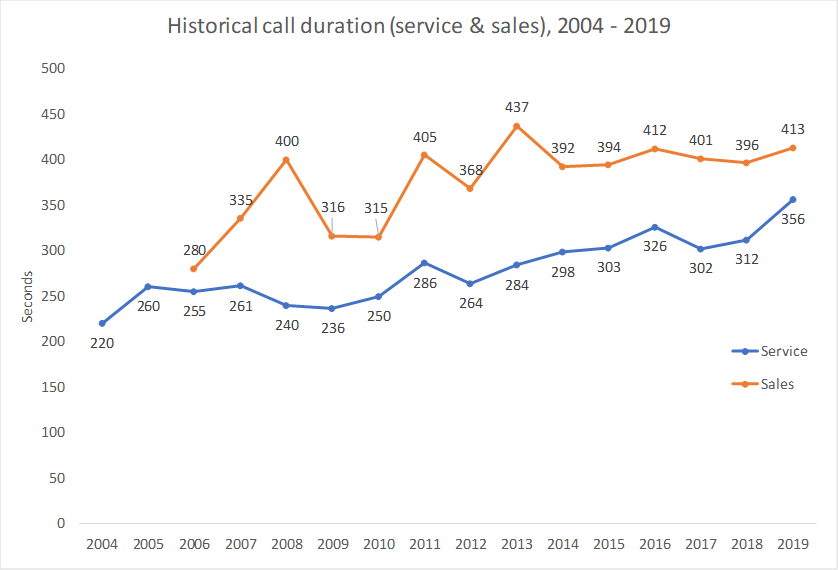

Contact Babel teased some data from their latest UK Contact Centre Decision Maker’s Guide, which is planned for release in mid-November. They report a 62% increase in service call duration since 2004. See the chart above.

Why is this so interesting?

The net impact of self-service is to take simpler transactions off the plate of the call center agent. As self-service becomes more effective, the calls that end up with agents skew toward more and more complex transactions (the edge cases, the tricky issues), and these calls, naturally, take longer.
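To make the mix-shift arithmetic concrete, here is a minimal sketch in Python. Every number in it is hypothetical and chosen only for illustration; none of it comes from the Contact Babel data, and the `average_duration` helper and the "simple"/"complex" split are assumptions made for this example.

```python
# Hypothetical call mix for illustration only; not taken from any real report.
def average_duration(mix):
    """Volume-weighted average call length in minutes.

    `mix` maps a call type to a (number_of_calls, minutes_per_call) pair.
    """
    total_calls = sum(calls for calls, _ in mix.values())
    total_minutes = sum(calls * minutes for calls, minutes in mix.values())
    return total_minutes / total_calls

# Before: agents handle mostly short, simple calls.
before = {"simple": (700, 3.0), "complex": (300, 8.0)}

# After: assume self-service absorbs 500 of the 700 simple calls.
# Complex calls are unchanged in both volume and length.
after = {"simple": (200, 3.0), "complex": (300, 8.0)}

avg_before = average_duration(before)
avg_after = average_duration(after)
increase_pct = (avg_after / avg_before - 1) * 100

print(f"{avg_before:.1f} min -> {avg_after:.1f} min (+{increase_pct:.0f}%)")
# prints: 4.5 min -> 6.0 min (+33%)
```

In this toy scenario no individual call gets any longer. The average rises only because the short calls leave the agent queue, which is the effect described above.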

An increase in average call duration is the best objective indicator of aggregate improvement in self-service.

Note what this doesn’t tell us:

It doesn’t say which self-service technology is doing the heavy lifting here. Is it just better websites? IVRs? Chatbots? An even combination of them all?

In fact, stepping back one level further: It doesn’t say whether the self-service offerings have themselves gotten better or whether consumers are just more willing to use them (or less willing to make phone calls).

Maybe it just reflects the increase in smartphone penetration over that same 15-year period, which makes self-service options always available?

We can’t really separate out the contribution of any of those trends. So the data is a bit unsatisfying in that it can’t tell a contact center which technology to invest in. But it does show that self-serve options, in aggregate, are taking on a growing share of “easy” transactions.

Contrast This With “Catchy” Forecasts

Another downside with this data is that it’s a lagging indicator. That is, it tells us what improvements have already happened. But the real appetite out there is for leading indicators, or even better, forecasts that go out five years.

There’s good reason for that appetite. IT budgets need to be planned out, and the more you can see into the future, the better you can plan.

So, that’s where the analysts come in. They tell us things like “80% of interactions will be automated by 2025.” I find these pronouncements pretty meaningless.

Two big flaws: First, the data comes from surveying call center execs about what they think will happen, and the survey participants have no stake in getting it right. Second, how are they counting “interactions”? I would guess that every company handles that differently. (If I spend an hour browsing on Expedia before booking, how many “interactions” is that? And if I then call, will they count that as just one?)

The forecasts serve a useful role, but everyone who relies on them for planning processes should be keenly aware of their limitations. Always look to whatever hard data you can find first.
